Quantum computing—magnificent in conception though embryonic in performance—is being touted as the next great information-technology revolution.

Enthusiasts are predicting that quantum machines will solve problems beyond the reach of conventional computers, transforming everything from medical research to the concepts of space and time.

Meanwhile, the actual quantum computers being tested in university and corporate laboratories are mostly exotic divas that run at temperatures colder than intergalactic space and crash in milliseconds if intruded on by the outside world.

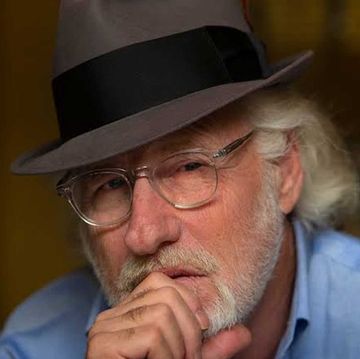

“I worry a lot about the hype,” John Preskill told me recently as we chatted in his office in the gleaming, glass-shrouded building at the California Institute of Technology in Pasadena where his Institute for Quantum Information does its weird work. A long-sighted physicist in the tradition of Caltech’s Richard Feynman, Preskill is a leading advocate of quantum computing. But even he pooh-poohs the idea that quantum computers will soon replace our laptops.

“Everybody believes it, but nobody can prove it,” he said. “Changing everything in 10 years is not realistic.”

Such reservations haven’t kept governments and the private sector from betting that visionaries like Preskill—and like China’s Pan Jian-Wei, known in his homeland as the “father of quantum”—will succeed. The Chinese government is reportedly investing $11 billion in developing quantum computers and quantum-ready networks. The U.S. government and the European Union are in for more than a billion dollars each. IBM has put a rudimentary quantum computer online, complete with tutorials on how to frame questions it can understand. (Sample instruction: “Apply a Hadamard gate to q[0] by dragging and dropping the H gate onto the q[0] line.”) Amazon’s cloud-computing services now include access to quantum computers operated by IonQ, D-Wave Systems, and the Berkeley chipmaker Rigetti Computing. Google claims to have attained “quantum supremacy,” a term Preskill coined for the ability to solve problems no conventional computer can handle.

Google’s facility sits inconspicuously in an aging industrial park near the Santa Barbara airport. It’s identified only by a bumper sticker on the glass front door. Inside, I was shown five quantum computers, all humming away. Each was housed in a giant thermos hung on chains to minimize vibrations from the ground. Dozens of reedy silver coaxial cables fed into each computer, conveying microwave pulses through its quantum chip and back to the dozens of scientists hunched over display terminals in the next room. Google research scientist Erik Lucero told me that the team’s goal is to make quantum computers practical, then “give them to the world.”

The world could use them.

Ordinary computer chips are approaching their theoretical limits. They’ve been getting smaller and faster for decades, but their millions of tiny transistors cannot be shrunk much more without running into interference from—ironically enough—the quantum fluctuations that pervade the universe on submicroscopic scales.

Conventional computers also raise environmental concerns. The global information-technology sector, growing by 3 percent a year, already emits as much greenhouse gas as the airlines do. Supercomputers that gobble up more electricity than 10,000 homes are starting to look as antiquated as steam locomotives, considering that a quantum chip about the size of a postage stamp could, in theory, do more in seconds than a supercomputer could accomplish in a thousand years. Quantum’s greener.

Long-term prospects aside, there’s a hardball motive for investing in quantum computing right now: if you don’t, somebody else may get there first.

Consider encryption. Today’s commercial and military encryption systems were designed to foil conventional—not quantum—computers. In the popular public-key encryption system, each financial transaction is identified by a “public” number, generated by multiplying two primes. Cracking the code requires determining which two prime numbers were multiplied, a task that would take a conventional computer billions of years to accomplish.
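The lopsidedness of that arrangement can be seen in a toy sketch. Multiplying two primes takes one step; recovering them by trial division means searching candidate divisors one at a time. The primes below are tiny stand-ins chosen for illustration, and the function name `factor_semiprime` is hypothetical. Real public-key systems use primes hundreds of digits long and more machinery than is shown here, and this is ordinary brute force, not Shor’s algorithm.

```python
def factor_semiprime(n):
    """Find p, q with p * q == n by trial division (toy-sized n only)."""
    if n % 2 == 0:
        return 2, n // 2
    d = 3
    # Only divisors up to sqrt(n) need checking; the search still grows
    # rapidly as n gains digits, which is the point of the scheme.
    while d * d <= n:
        if n % d == 0:
            return d, n // d
        d += 2
    raise ValueError("n has no nontrivial factor (it is prime or too small)")


# Two small primes, assumed here purely for illustration.
p, q = 10007, 10009

# The easy direction: one multiplication produces the "public" number.
public_number = p * q

# The hard direction: thousands of trial divisions even at this toy size.
recovered = factor_semiprime(public_number)
print(public_number, recovered)
```

Each extra digit in the primes multiplies the work of the search, which is why the conventional approach stalls long before it reaches numbers of practical size.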

Such cryptography systems, immune to brute-force decoding because doing so would take too long, seemed pretty secure until Peter Shor came along.

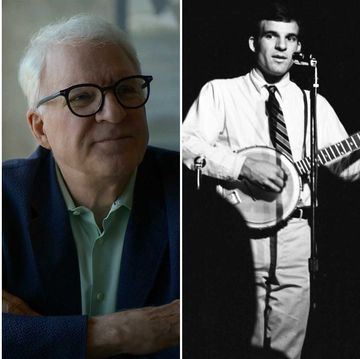

Shor, a graduate of Marin County’s Tamalpais High School and Caltech who went on to win the Gödel Prize in theoretical computer science, demonstrated in 1994 that a proper quantum computer could break public-key encryptions in a matter of seconds. As a recent National Academy of Sciences report rather dryly put it, Shor’s algorithm sparked “strong commercial interest in deploying post-quantum cryptography well before such a quantum computer has been built.”

The challenge is starkly clear. Build a fully functional quantum computer first, and you might crack the other side’s codes before they can crack yours. Miss out, and you’re toast.

The code-busting potential of quantum computing has not been lost on the Chinese government, which last November passed a law threatening to “punish” any private corporations employing ciphers the authorities can’t break. Much of the money China has earmarked for quantum computer research is said to be going toward deploying computer networks designed to resist quantum intrusion. Chinese researchers are experimenting with quantum-encoding techniques to create messages that cannot be eavesdropped on without the recipient seeing evidence of it. In one such test, on September 29, 2017, Pan Jian-Wei and his colleagues dispatched a quantum-encrypted key from an orbiting satellite to Vienna and Beijing.

QUEST FOR QUBITS

Conventional digital computers manipulate binary digits, or bits: the zeros and ones that, as Alan Turing proved in 1936, suffice in principle to carry out any computation that can be specified step by step. Visions of a “universal” computer, which a century earlier had so enchanted the mathematician Charles Babbage that he came to be regarded as a raving crank, grew into today’s digital world with its five billion people using mobile phones.

Quantum computers, too, use bits to communicate with the outside world. But inside their quantum world, they employ what are called quantum bits, or qubits.

When measured, a single qubit, such as an isolated atom or electron, yields only a single on-or-off, zero-or-one result, just like each transistor on a conventional chip. The magic of qubits resides in their ability to be combined (“entangled,” in the jargon) with one another, so that many qubits work together. Entangled qubits scale up exponentially: the number of states a machine can hold at once doubles with each added qubit, so a 4-qubit quantum computer spans not 4 but 16 states. A reliable 300-qubit quantum-computing chip could outperform a conventional computer the size of the observable universe.
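A few lines of ordinary code can make both ideas concrete. The sketch below keeps a list of amplitudes, one per possible bit pattern, so its length doubles with every qubit. It then applies a Hadamard gate and a controlled-NOT gate to two qubits, a standard textbook recipe for an entangled pair. The function names are hypothetical and the whole thing is a classical simulation under simplifying assumptions (real amplitudes only, no noise), not a description of how any particular machine is programmed.

```python
import math


def zero_state(n):
    """State for n qubits all reading 0: 2**n amplitudes, the first is 1."""
    state = [0.0] * (2 ** n)
    state[0] = 1.0
    return state


def hadamard(state, target):
    """Apply a Hadamard gate to qubit `target` (bit `target` of the index)."""
    s = 1 / math.sqrt(2)
    out = state[:]
    for i in range(len(state)):
        if not (i >> target) & 1:      # index i has the target bit at 0
            j = i | (1 << target)      # partner index with the target bit at 1
            a, b = state[i], state[j]
            out[i] = s * (a + b)
            out[j] = s * (a - b)
    return out


def cnot(state, control, target):
    """Flip qubit `target` wherever qubit `control` reads 1."""
    out = state[:]
    for i in range(len(state)):
        if (i >> control) & 1 and not (i >> target) & 1:
            j = i | (1 << target)
            out[i], out[j] = state[j], state[i]
    return out


# Entangle two qubits: Hadamard on qubit 0, then CNOT from qubit 0 to qubit 1.
bell = cnot(hadamard(zero_state(2), 0), 0, 1)

# Chance of reading 00, 01, 10, 11: only "both 0" or "both 1" ever appear.
probabilities = [round(a * a, 3) for a in bell]
print(probabilities)

# The list a classical simulator must store doubles with each added qubit.
for n in (1, 2, 3, 4, 50):
    print(n, "qubits ->", len(zero_state(n)) if n <= 4 else 2 ** n, "amplitudes")
```

At 300 qubits the list would need 2**300 entries, more than the roughly 10**80 atoms commonly estimated for the observable universe, which is where the comparison in the text comes from.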

The current state of the art is somewhere between IBM’s 50-qubit Q System One—a black-lacquer showpiece encased in a nine-foot borosilicate glass cube—and a 72-qubit machine being tested by Google. The Google machine said to have attained quantum supremacy employs a 53-qubit chip. (It was built to run 54 qubits but one never worked, so the researchers went with what they had.)

Most such machines are what Preskill calls noisy intermediate-scale quantum systems, or NISQs. They’re “noisy,” he notes, in that researchers “have imperfect control” over their qubits. They’re “intermediate” because properly controlling their 50 or so qubits would produce more power than any existing supercomputer but still fall short of quantum computing’s potential. Until the noise can be significantly reduced, Preskill predicts, “quantum computers with 50 to 100 qubits may be able to perform tasks which surpass the capabilities of today’s classical digital computers but…will not change the world right away.”

NISQ qubits are typically made in superconducting circuits, each a tiny oval racecourse interrupted by a single barrier called a Josephson junction. To build such a NISQ, pack a bunch of Josephson junctions close together, to encourage them to entangle, and chill them to nearly absolute zero in your laboratory thermos. Electrons will circle each racecourse ceaselessly, going in both directions at once, quantum-leaping through the barriers to create a single entity with an enormous calculating potential.

Once that’s happening, hit your supercooled chip with a shaped microwave pulse. The pulse excites the quantum system, which responds by exploring its vast internal space of possible futures, canceling out those that exclude one another and delivering the result as an output pulse. Repeat the process, sifting out noise, until the computation is complete.
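Why repetition sifts out noise can be shown with a deliberately crude classical model. Suppose each run returns the right answer most of the time and a wrong one otherwise; tallying many runs lets the right answer dominate. The 30 percent error rate, the shot count, and the function names below are all assumptions made for illustration, not measurements from any real chip.

```python
import random
from collections import Counter


def noisy_run(true_answer, error_rate, rng):
    """One simulated run: returns the right bit unless noise flips it."""
    if rng.random() < error_rate:
        return 1 - true_answer
    return true_answer


def repeated_answer(true_answer, error_rate, shots, seed=0):
    """Repeat the run `shots` times and return the most common result."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    counts = Counter(
        noisy_run(true_answer, error_rate, rng) for _ in range(shots)
    )
    return counts.most_common(1)[0][0], counts


# Hypothetical numbers: a 30 percent chance of a wrong reading per run.
answer, counts = repeated_answer(true_answer=1, error_rate=0.3, shots=1001)
print(answer, dict(counts))
```

Any single run here is wrong nearly a third of the time, yet the tally over a thousand runs is all but certain to favor the true answer. The cost is time, which is one reason lower noise matters so much.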

In a typical quantum computer, such Q-and-A events can take place a trillion times a second.

Entanglement is fragile. Anything from heat to cosmic rays to an overzealous input pulse can wreck it. But it’s so promising that it’s been called “a physical resource, like energy,” and its exploitation an industry.

Preskill characterizes his research as exploring “the entanglement frontier.”

WEIRD QUEST

The fact that much more goes on inside a quantum system than can ever be detected was established in the mid-1920s by the physicist Werner Heisenberg. Dubbed “uncertainty,” it was long regarded as a limitation on human knowledge. The uncertainty principle means, for instance, that the more one learns about a quantum particle’s velocity, the less can be known about its location. This is the basis of the joke in which Heisenberg, pulled over for speeding by a cop who tells him, “You were going 90 miles an hour,” replies, “Thanks a lot. Now I have no idea where I am.”

But by the 1980s, as personal computers were becoming commonplace, scientists started to think about the other side of the Heisenberg coin. They speculated that the vast internal states of quantum systems might be put to work for computing. It wouldn’t matter that a quantum chip’s internal deliberations cannot be observed; what mattered was that they might deliver accurate results to the outer world. Since quanta are how nature works, the answers would be coming, so to speak, from the horse’s mouth. Suddenly, Heisenberg’s quantum uncertainty began to look less like a limitation than a resource.

Richard Feynman had started exploring the prospect of quantum computing decades earlier. “When our computers get faster and faster and more and more elaborate,” he predicted in 1959, “we will have to make them smaller and smaller. But there is plenty of room to make them smaller.”

Preskill, Caltech’s Richard P. Feynman Professor of Theoretical Physics, has something of Feynman’s sense of humor—responding to a Twitter poll, he said he became a scientist because “I don’t mind being confused most of the time”—and something of his showmanship. Preskill kicked off a black-tie celebration of Feynman’s legacy a few years back by singing an ode to quantum computing that he’d written to the tune of South Pacific’s “Some Enchanted Evening”:

Quantum’s inviting

Just as Feynman knew.

The future’s exciting

If we see it through!

Once we have dreamt it

We can make it so.

Once we have dreamt it

We can make it so!

Preskill readily rattles off potential practical benefits of quantum computing—from more efficient solar cells to quantum-entangled space telescopes orbiting the sun—but a scientist of his stature doesn’t devote decades to a subject just to stimulate spin-offs. Preskill wants to use quantum computers to simulate nature itself, investigating realms of reality beyond the reach of observation and experiment.

PROBING SPACE AND TIME

Quantum physics, discovered by Max Planck in 1900 and largely defined by 1930, revealed that the fundamental building blocks of nature are not particles or waves but quanta. (Quanta are the irreducible packets of information that can be extracted from any process.)

Generations of theoretical work and laboratory experiments have confirmed the validity of the quantum approach. As the Caltech physicist Sean Carroll writes, “nature is quantum from the start. Quantum mechanics isn’t just an approximation of the truth: It is the truth.”

There is, however, a conspicuous gap in quantum theory: gravity, the force that gathered together the incoherent masses emerging from the big bang to make galaxies, stars, and the planet we live on. Exquisitely accurate quantum theories account for the behavior of the other three fundamental forces—electromagnetism and the strong and weak nuclear forces—but gravity is much too weak to play a significant role in most laboratory experiments. Using existing technology to probe quantum gravity would require constructing a particle collider the size of the solar system. Experiments conducted at the edge of a black hole might be fruitful, but the nearest black hole is 3,000 light-years from Earth.

“Quantum gravity is hard,” Preskill notes, “because you can’t do experiments.”

It might be possible, though, to use quantum computers to simulate how gravity works. Einstein having shown that gravity curves space, and that space and time are two aspects of the same phenomenon, quantum simulations could lay bare the nature of space and time.

Simulations using conventional computers are already widely successful. Formula One race drivers put in long hours on simulators before getting to the track, and commercial pilots use simulators to acquaint themselves with new models of aircraft. But a conventional computer can’t even simulate the behavior of a hundred atoms for a millionth of a second, much less that of quanta roiling at the edge of a black hole. The only known way to get quantum is to go quantum.

Quantum computers have the advantage of working in the same way as the systems they’d be simulating. As Feynman argued in 1982, “Nature isn’t classical, dammit, and if you want to make a simulation of nature, you’d better make it quantum mechanical.

“By golly it’s a wonderful problem,” he added, “because it doesn’t look so easy.”

The scientific potential of using quantum computers to simulate quantum gravity can be summarized by a single, rather astounding fact: any quantum system can simulate any other quantum system, provided it has at least as many qubits as the system being simulated.

As Preskill puts it, a quantum computer using enough entangled qubits could “simulate efficiently any physical process that occurs in nature.” He expects such simulations to reveal the deeper quantum process that generates space and time. “Space-time comes from the emergent properties of this underlying system,” he asserts.

What is understood can be controlled, although this isn’t always obvious at first. Einstein discovered that enormous amounts of energy are locked inside atoms, but he thought it unlikely that the energy could ever be extracted to generate power. Today, nuclear power generates roughly 10 percent of the world’s electricity. Electrons were once regarded as so utterly exotic that physicists at a 1911 annual dinner toasted, “To the electron! May it never be of any use to anybody!” Yet so many uses were found that the global electronics industry is currently valued at over a trillion dollars.

What, then, might an understanding of quantum space-time enable humans to do?

Preskill expects that it might become possible to “create new worlds.”

“I really believe this is going to happen,” he said in his Caltech office, leaning back and smiling pleasantly, as one might expect of a would-be creator of universes.

Timothy Ferris is an emeritus professor at UC Berkeley and the author of a dozen books, among them Seeing in the Dark and Coming of Age in the Milky Way. He produced the Golden Record, an artifact of human music and other sounds of Earth launched aboard the twin Voyager interstellar spacecraft now exiting our solar system.

HOW QUANTUM COMPUTING WORKS

Quantum computers work by accessing the complex internal states of quantum entities, which in the most promising current systems are supercooled electrons.

CLASSICAL COMPUTER BIT

Ordinary computers store data and perform computations as a series of bits that are either 1 or 0.

QUBIT

By contrast, a quantum computer uses qubits (the internal states of quantum systems, which can be 1 and 0 at the same time) to do the calculating.

QUANTUM SUPREMACY

Entangling multiple quantum systems increases their power exponentially. Two qubits can have four states. With three qubits, it’s eight. With four qubits, it’s sixteen.

A 100-qubit quantum computer, if one can be built, would outperform a conventional computer the size of planet Earth. A 300-qubit machine might do better than an ordinary computer made out of every atom in the observable universe. That kind of computing power could model complex molecules and other quantum systems, making it possible to learn how quantum gravity might behave. Google’s quantum-supremacy experiment used a 53-qubit chip to do, in three minutes and 20 seconds, what a conventional computer might accomplish in days, months, or thousands of years.

COHERENCE

To function properly, a quantum chip’s entangled electrons or other particles must be kept “coherent” by isolating them from interference from the outer world. Most of the quantum computers currently at the performance forefront are kept cold and dark to discourage outside interference that would otherwise cause them to “decohere,” wiping out their calculations. Running a quantum computer involves sending microwave pulses to the chip without decohering it, each pulse exciting the entangled quantum system into producing an answering pulse that delivers the results of its calculations. In practice this means hitting the chip with millions of pulses per second, riding on the edge of decoherence, and doing the same calculation multiple times to enable a signal to emerge from the noise. Much better control over the quantum systems will be required to reduce the noise before quantum computers become fully operational.

STANDING ON THE BRINK OF CHANGE?

Computer science is no more about computers than astronomy is about telescopes.

—Edsger Dijkstra

In 1488, the artist Leonardo da Vinci sketched a flying machine. His 1505 Codex on the Flight of Birds advanced the idea that human flight should be possible. After all, birds can fly. Should it not be possible for some kind of device—perhaps with larger wings and some kind of superhuman energy to power it—to allow humans to fly, too?

But despite Leonardo’s profound insight and engineering genius, nobody could get the idea to work for some 400 years—not until 1903, when two bicycle mechanics constructed a flying machine.

Some 25 years later, Charles Lindbergh flew across the Atlantic. And some 30 years after that, thousands of people were crossing oceans and continents, sipping cocktails, and complaining about the movie.

Once the tipping point was reached, progress accelerated dramatically. Yet in the 1890s—a few years before the Wright brothers—several inventors had come close to creating flying machines. They could glide. Balloons could float. But actual human flight was just out of reach.

Something similar is happening today in the field of quantum computing. It seems like it might be possible to radically improve on what can be computed. After all, quantum systems exist in nature. Should it not be possible for us to build some kind of machine to harness the quantum facts of nature—and thereby vastly transform what can be constructed and what can be calculated?

The question becomes this: Are we in the Leonardo era of quantum computing, fantasizing about a mere possibility, or are we—as in the 1890s—in the antechamber of the future, where, within a few more years, and with some clever engineering, a revolution will occur in our daily lives?

—Will Hearst
